The safest way to use Claude and ChatGPT as a healthcare professional is to treat them as drafting, summarizing, and communication assistants, not as independent clinical decision-makers. That means using them for low-risk or reviewable work such as patient-education rewrites, literature summaries, inbox drafting, policy comparison, and documentation support, while keeping diagnosis, orders, and final patient-facing decisions under licensed human review.

This article is for clinicians, healthcare administrators, and clinical educators who want a practical workflow instead of vague AI hype. The core rule is simple: privacy first, low-risk tasks second, human sign-off always. That rule matches current guidance from OpenAI, Anthropic, the U.S. Food and Drug Administration (FDA), and the American Medical Association (AMA), all of which point toward governance, risk controls, and human accountability rather than blind automation (OpenAI, Anthropic, FDA, AMA).

Key Takeaways

- Claude and ChatGPT are most useful in healthcare for documentation support, patient communication, research synthesis, training, and administrative drafting.

- Do not paste patient-identifiable information into a consumer AI workspace that is not approved by your organization.

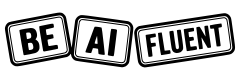

- As of April 22, 2026, OpenAI distinguishes between consumer health use and enterprise healthcare deployments, and Anthropic distinguishes between BAA-covered services and standard chat surfaces (OpenAI, Anthropic).

- The best healthcare AI workflow is

approved workspace -> bounded task -> source material -> draft -> clinician review -> final use. - If a task affects diagnosis, medication, triage, or compliance outcomes, AI should support preparation and review, not make the final call.

Table of Contents

- Where Claude and ChatGPT actually help in healthcare

- The privacy line: consumer chat versus approved healthcare workspace

- How to use Claude and ChatGPT in healthcare in 7 steps

- Best use cases for clinicians and healthcare teams

- A simple tool-selection framework: when Claude, when ChatGPT, when neither

- Prompt examples for healthcare professionals

- Common mistakes healthcare teams make with AI

- FAQ

Where Claude and ChatGPT Actually Help in Healthcare

Healthcare work has the exact mix of pressures that make language models attractive: too much documentation, too many messages, too much information to synthesize, and too little time. The opportunity is real, but so is the risk.

The AMA’s 2024 physician survey found that 66% of physicians had used AI in some form, while 68% said they saw at least some advantage in AI-enabled tools and 57% said the biggest opportunity was reducing administrative burden through automation (AMA). That is the right starting frame. The strongest early wins are usually not “AI diagnoses patients.” They are “AI helps clinicians communicate, summarize, and document faster.”

OpenAI’s healthcare help guidance makes a similar distinction. Its health-related offerings are described for wellness, health information, care navigation, and enterprise workflows, with separate handling for regulated healthcare deployments and sales-managed agreements (OpenAI). Anthropic makes the same split on its side by separating standard Claude surfaces from BAA-covered commercial services (Anthropic).

That is why the best healthcare use cases tend to look like this:

| Task | Why AI helps | Main risk | Human owner |

|---|---|---|---|

| Summarizing clinical literature | Cuts reading time and surfaces themes | Missed nuance or unsupported synthesis | Clinician or researcher verifies the paper |

| Rewriting patient instructions into plain language | Improves readability and translation scaffolding | Oversimplification or unsafe omission | Clinician approves final wording |

| Drafting inbox or portal responses | Speeds routine communication | Tone drift or unsafe advice | Licensed reviewer signs off |

| Turning long notes into structured outlines | Reduces admin burden | Missing context or false certainty | Author validates content |

| Comparing policy or protocol drafts | Finds differences quickly | Outdated policy assumptions | Compliance or clinical lead approves |

| Training and education support | Generates cases, quizzes, summaries | Incorrect teaching points | Educator reviews accuracy |

In healthcare, the safest question is rarely “Can AI answer this?” It is “Can a licensed human quickly verify and own this output before it affects care?”

If you want the broader trust framework behind that rule, Are AI Tools Accurate? and What Generative AI Can and Cannot Do are the right companion reads.

The Privacy Line: Consumer Chat Versus Approved Healthcare Workspace

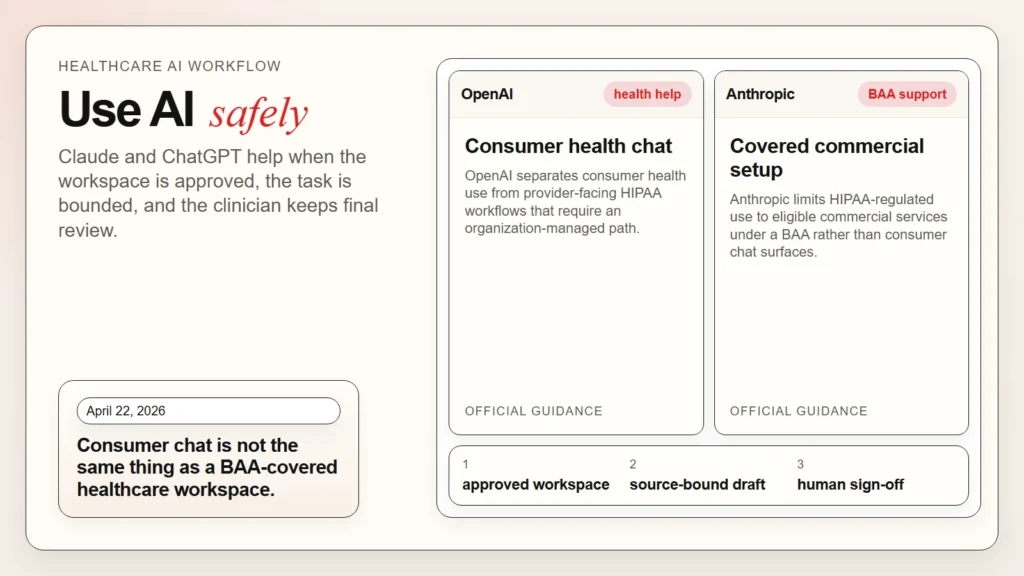

This is the most important section in the article because many healthcare AI failures start here. People talk about “using ChatGPT” or “using Claude” as if every plan and workspace has the same privacy posture. They do not.

As of April 22, 2026, OpenAI’s health help article says ChatGPT Health is intended for consumers managing their own health and wellness and explicitly says it does not support HIPAA-compliant use for healthcare providers or covered entities. The same article says ChatGPT for Healthcare supports healthcare organizations through a sales-managed plan with a Business Associate Agreement (BAA) and points to a separate BAA process (OpenAI, OpenAI BAA).

Anthropic’s commercial BAA article is equally specific. It says that under a BAA, eligible commercial customers may use Anthropic API, Claude Enterprise, and Claude Team for HIPAA-regulated workloads, but it also says consumer services such as claude.ai, mobile apps, Claude Code, and Claude for Work are not BAA-covered. It also notes that chat retention, projects, artifacts, and conversation export are not covered in BAA-enabled workspaces (Anthropic).

Here is the operational takeaway:

| Workspace type | Can you assume it is safe for PHI? | Practical rule |

|---|---|---|

| Personal or consumer AI chat account | No | Do not enter patient-identifiable information |

| Organization-managed healthcare deployment with signed BAA | Only if your compliance team approved the exact setup | Follow local policy and covered-feature limits |

| Pilot or sandbox without governance review | No | Use de-identified or synthetic cases only |

| Secure enterprise workspace for admin drafting | Only for approved data classes | Match the task to the approved data policy |

That distinction matters because the FDA continues to frame AI-enabled medical software around risk, intended use, validation, and oversight rather than around “AI” as a single category (FDA). A compliant healthcare AI workflow is not just about model quality. It is about the exact product tier, retention settings, covered features, organization policy, and human review path.

A quick privacy checklist before you use either tool

- Is this the exact Claude or ChatGPT workspace approved by your organization?

- Does your compliance or security team allow this data type in this workspace?

- Are retention, export, and connected features configured the way policy requires?

- Can the task be completed with de-identified, synthetic, or abstracted data instead?

- Will a licensed human review the result before it affects patient care?

If any answer is unclear, stop there. Use de-identified information only, or do not use the tool for that task.

Caption: The safe question is not just which model you use. It is which exact workspace, BAA status, and covered features your organization approved.

Caption: The safe question is not just which model you use. It is which exact workspace, BAA status, and covered features your organization approved.

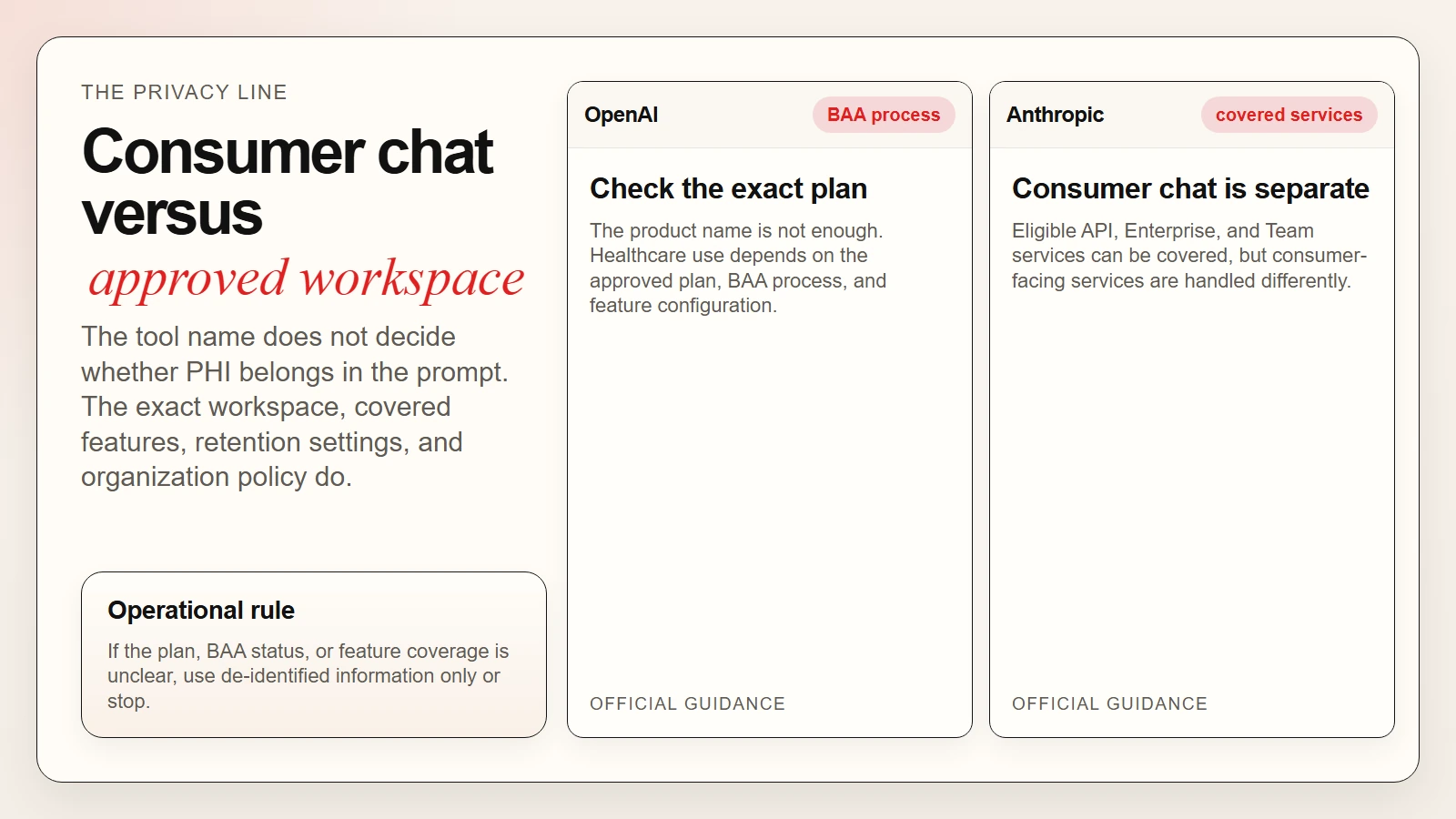

How to Use Claude and ChatGPT in Healthcare in 7 Steps

The best healthcare AI workflow is boring on purpose. It reduces avoidable risk and makes the review burden clear.

Step 1. Pick a bounded task

Start with a task where the output is easy to inspect.

Good starting examples:

- Rewrite discharge instructions into plain-language reading level.

- Summarize a journal article into key practice implications.

- Draft a response to a routine non-urgent patient portal message.

- Turn meeting notes into action items for the care team.

- Compare two protocol drafts and list the differences.

Bad starting examples:

- Decide what diagnosis is most likely.

- Recommend medication or dosage changes.

- Triage a patient independently.

- Generate final chart content without review.

- Interpret tests as the final authority.

Step 2. Use the right workspace first

Before you write the prompt, confirm the workspace is the approved one. This sounds obvious, but it is where many real-world failures happen. If you are in a personal account, treat the tool like a public drafting assistant and keep patient details out.

Step 3. Separate source material from instructions

Use three buckets:

- Source material: the note, paper, policy, or draft you want help with

- Constraints: reading level, tone, formatting, must-include warnings, citation requirement

- Task instruction: what you want the model to produce

That separation makes it easier to review what came from evidence and what came from generation. It also lines up with the larger AI governance principle that healthcare organizations should make AI outputs inspectable and attributable, not opaque.

Step 4. Ask for a draft, not a final decision

The wording matters. Ask the model to:

- summarize

- rewrite

- organize

- compare

- draft

- flag uncertainty

Do not ask it to:

- diagnose

- approve

- clear

- prescribe

- decide independently

Step 5. Force the model to show uncertainty

One of the simplest safety improvements is telling the model to mark uncertainty clearly. For example:

If the source does not support a claim, say “uncertain” or “source needed” instead of filling the gap.

That is much safer than getting a smooth but invented sentence.

Step 6. Review in a domain-specific pass

For healthcare work, a good review pass checks:

- clinical accuracy

- patient safety

- policy alignment

- privacy exposure

- readability for the intended audience

- missing caveats or escalation instructions

Step 7. Save the workflow, not just the answer

The real productivity gain comes from repeatable templates. If one prompt works for patient instruction rewrites, literature summaries, or portal message drafts, save that process. That is how teams move from random prompting to a real AI workflow.

Caption: The best healthcare AI workflow is narrow by design: approved workspace, source-bound draft, visible uncertainty, and human sign-off.

Caption: The best healthcare AI workflow is narrow by design: approved workspace, source-bound draft, visible uncertainty, and human sign-off.

Best Use Cases for Clinicians and Healthcare Teams

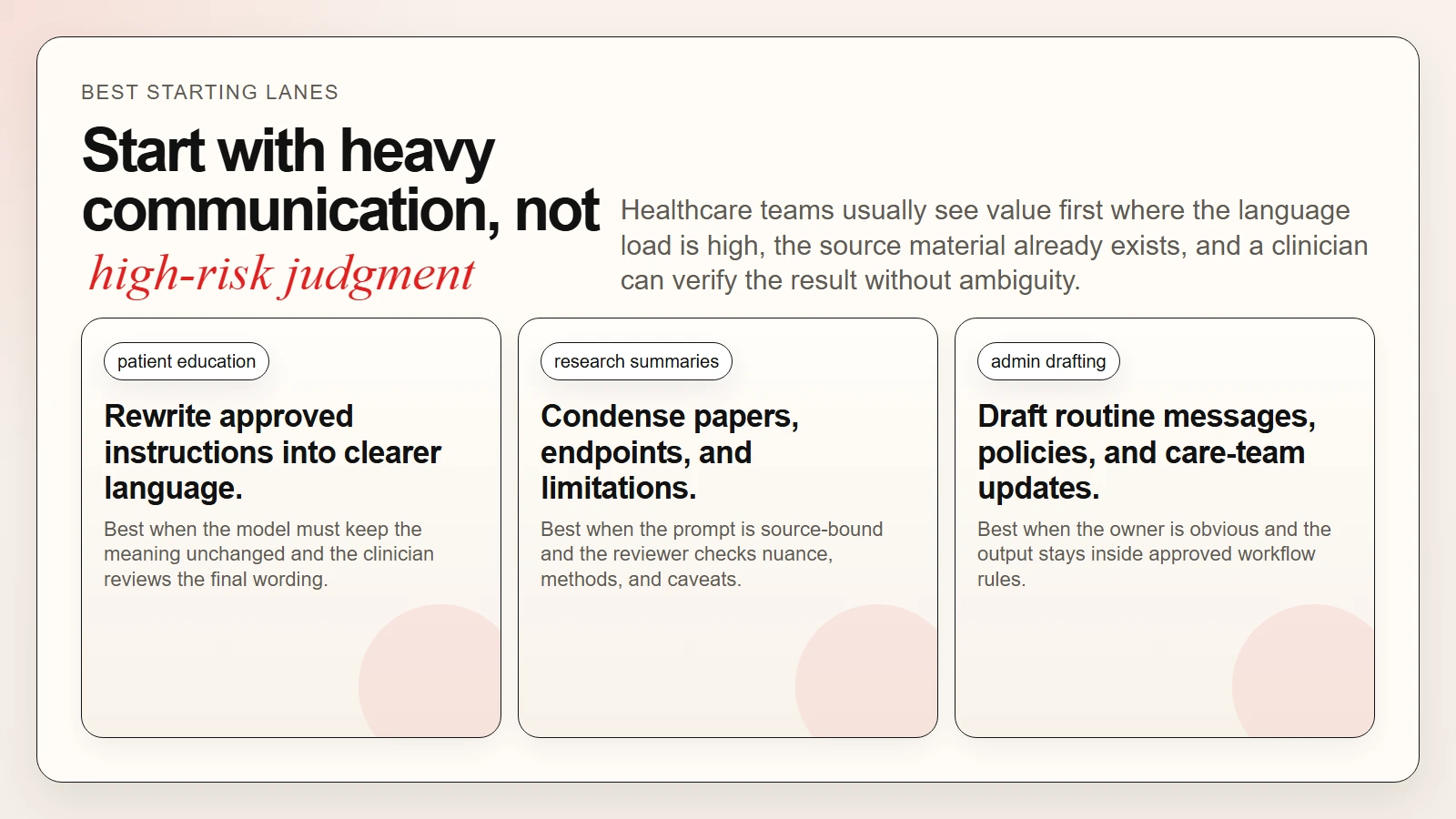

The best use cases are the ones that combine high communication load with strong human review. Start there.

1. Patient education and plain-language rewriting

Many clinical materials are too dense for the average patient. AI can help rewrite them into clearer language while preserving the meaning for clinician review.

Useful pattern:

- start from your approved clinical content

- ask the model to rewrite at a target reading level

- require a “what changed” summary

- have a clinician approve the final version

This is usually safer than asking AI to generate fresh medical advice from scratch.

2. Research and guideline summaries

Claude and ChatGPT can both help summarize a paper, organize the study design, surface endpoints, and compare findings across sources. The risk is that the summary can flatten nuance or overstate a conclusion.

That is why the better prompt is:

Summarize only what is supported by the abstract and methods provided. Separate findings, limitations, and open questions.

If research-heavy workflows are part of your role, How to Use AI Workflows for Research, Notes, Meetings, and Planning is a useful companion because it gives you a repeatable structure for collecting sources, shaping prompts, and reviewing outputs.

3. Administrative drafting and care-team communication

This is where a lot of ROI shows up first. AI can help with:

- meeting summaries

- staffing update drafts

- policy memo drafts

- denial or prior-authorization support letters

- education handouts

- non-urgent message templates

The task stays reviewable, the language burden is high, and the human owner is obvious.

4. Protocol comparison and policy cleanup

Healthcare organizations live in version control chaos: old SOPs, revised policies, regulatory updates, committee edits. AI is useful for comparing versions and surfacing differences quickly.

| Policy task | Good AI output | Human review question |

|---|---|---|

| Compare v1 and v2 of a protocol | List changed sections and missing items | Are the flagged changes clinically and legally complete? |

| Rewrite policy in plainer language | Cleaner, shorter wording | Did any requirement become softer or more ambiguous? |

| Summarize training material | Key takeaways and action points | Did any safety-critical detail get omitted? |

5. Education, simulation, and onboarding

Clinical educators can use AI to draft quiz questions, case stems, onboarding summaries, or training scenarios. This is lower-risk than direct patient care use and often easier to quality-control.

The pattern is the same: use AI to accelerate structure, then let a human subject-matter expert approve final content.

Caption: Start where the communication load is high and the human owner is obvious: patient education, research summaries, and admin drafting.

Caption: Start where the communication load is high and the human owner is obvious: patient education, research summaries, and admin drafting.

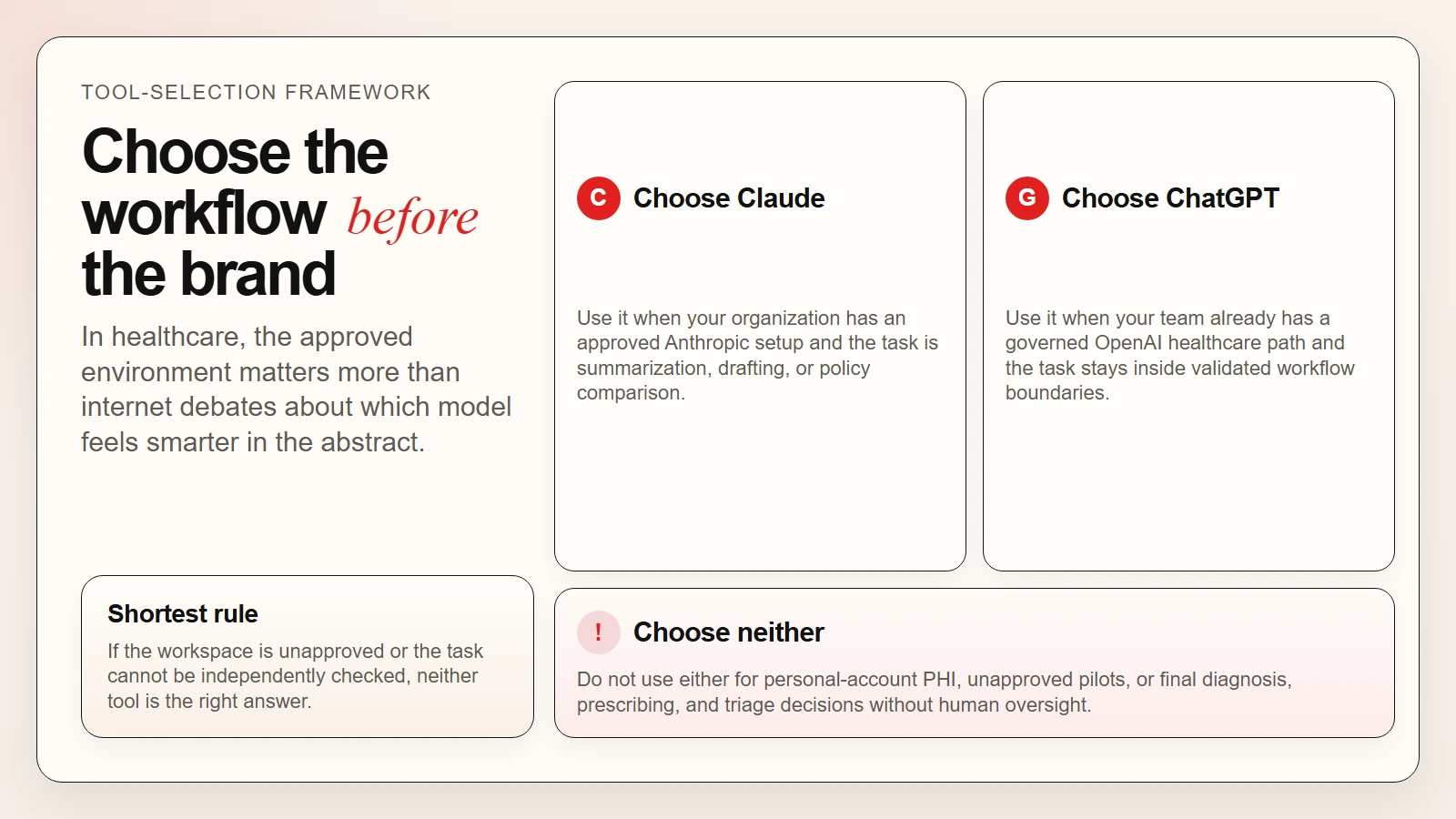

A Simple Tool-Selection Framework: When Claude, When ChatGPT, When Neither

The right choice is usually less about internet brand debates and more about which approved workspace your organization supports.

Choose ChatGPT when

- your organization has a healthcare-approved OpenAI deployment or BAA-covered plan

- your team already uses ChatGPT in a governed workflow

- you need a strong general-purpose drafting and summarization assistant in an approved environment

- you are using OpenAI’s health-specific enterprise pathway rather than a consumer plan

Choose Claude when

- your organization has an Anthropic-approved commercial setup with the right covered services

- your workflow depends on a Claude deployment that compliance has already reviewed

- you are working on summarization, drafting, policy comparison, or internal communication tasks in an approved workspace

Choose neither when

- the workspace is personal, unapproved, or unclear

- the task is high-risk and cannot be independently checked

- you are under time pressure and cannot perform a full human review

- the output would directly drive diagnosis, prescribing, or triage decisions without oversight

Here is the shortest decision table:

| Situation | Claude | ChatGPT | Best answer |

|---|---|---|---|

| Approved enterprise healthcare workflow | Maybe | Maybe | Use the one your organization has validated |

| Personal account with PHI | No | No | Do not use either |

| De-identified admin drafting | Yes, if approved | Yes, if approved | Either can work |

| Final clinical decision-making | No | No | Human clinician decision only |

| Research summary for internal review | Yes, if reviewed | Yes, if reviewed | Either can work with source-based prompts |

If you are still building your foundation, How to Start Using AI as a Complete Beginner and Top AI Tools for Everyday Work and How They Are Being Used are useful companion pieces.

Caption: In healthcare, the validated workflow matters more than model-brand debates. If the environment is unapproved or the task is high-risk, use neither.

Caption: In healthcare, the validated workflow matters more than model-brand debates. If the environment is unapproved or the task is high-risk, use neither.

Prompt Examples for Healthcare Professionals

The safest prompts in healthcare are narrow, source-bound, and review-friendly. They do not ask the model to “practice medicine.” They ask it to make material easier to inspect.

Prompt 1. Rewrite patient instructions more clearly

Rewrite the instructions below for a patient reading at roughly an 8th-grade level.

Requirements:

- keep the medical meaning unchanged

- do not add new medical advice

- keep medication names and dose wording exactly as written

- add a short bullet list called "When to call the clinic"

- if anything is unclear, mark it as uncertain instead of guessing

Source text:

[paste approved instructions]

Prompt 2. Summarize a paper for a clinician

Summarize this study for a busy clinician.

Return:

- study question

- population

- design

- primary outcome

- key findings

- limitations

- what would still need clinical judgment

Only use the text provided. Do not infer results that are not stated.

Source:

[paste abstract or notes]

Prompt 3. Draft a routine non-urgent portal response

Draft a response to this non-urgent patient message.

Requirements:

- keep the tone calm and professional

- do not diagnose

- do not recommend medications beyond the approved reply points below

- include clear follow-up instructions if symptoms worsen

- keep it under 140 words

Approved reply points:

[paste clinic-approved guidance]

Patient message:

[paste de-identified message or approved summary]

Prompt 4. Compare protocol versions

Compare these two protocol drafts.

Return:

- a table of substantive changes

- any changes that could affect safety, documentation, or compliance

- any ambiguous wording that should be reviewed by a clinical lead

Do not judge which version is correct unless the source text explicitly supports it.

Version A:

[paste]

Version B:

[paste]

Common Mistakes Healthcare Teams Make With AI

Most mistakes are workflow mistakes, not model mistakes.

1. Treating the consumer app like the enterprise deployment

This is the biggest one. Product name recognition is not the same thing as governance approval.

2. Asking for judgment instead of drafting help

Once the prompt shifts from “summarize this” to “tell me what to do,” the risk goes up fast.

3. Pasting in raw PHI without checking the workspace

Never assume the default environment is allowed.

4. Letting polished language hide weak evidence

AI can make uncertain information sound organized. That is not the same as making it correct.

5. Skipping the final human review because the output “looks right”

In healthcare, “looks right” is not a standard.

A good healthcare AI workflow reduces clerical burden. A bad one silently moves clinical, legal, and reputational risk upstream into the wrong place.

The long-term skill underneath all of this is AI fluency: knowing how to scope the task, inspect the result, and keep accountability with the human.

FAQ

Can healthcare professionals use ChatGPT for patient care work?

Only within an organization-approved setup and only for tasks that fit that setup’s privacy and governance rules. As of April 22, 2026, OpenAI says ChatGPT Health does not support HIPAA-compliant use for healthcare providers, while ChatGPT for Healthcare is handled through a sales-managed plan with a BAA process (OpenAI, OpenAI BAA).

Can healthcare professionals use Claude with protected health information?

Not by default. Anthropic’s BAA guidance says only certain commercial services are covered under qualifying agreements, and it explicitly excludes several common consumer and chat features. Healthcare teams should follow the exact covered-service list and local compliance approval before using Claude with regulated data (Anthropic).

What are the safest first use cases for Claude and ChatGPT in healthcare?

The safest starting points are low-risk, reviewable tasks such as literature summaries, patient-education rewrites from approved source text, administrative drafting, policy comparison, training materials, and internal meeting summaries.

Should Claude or ChatGPT ever make clinical decisions on their own?

No. These tools can support information handling and drafting, but final diagnosis, prescribing, triage, and treatment decisions should remain under licensed human judgment and established clinical governance.

Which is better for healthcare, Claude or ChatGPT?

The better choice is usually the one your organization has formally approved, configured, and governed for the exact task you need. In many teams, the deciding factors are not model brand or vibe but privacy controls, BAA status, retention settings, supported workflows, and review policy.

Conclusion

If you want to use Claude and ChatGPT well as a healthcare professional, start by rejecting the wrong mental model. These tools are not junior clinicians. They are language and workflow assistants.

Used well, they can reduce administrative friction, improve plain-language communication, speed up literature synthesis, and help teams turn messy information into cleaner drafts. Used badly, they can blur privacy boundaries, overstate certainty, and create clinical or compliance risk that no one meant to take on.

The practical rule is clear: use an approved workspace, pick a bounded task, keep source material visible, require uncertainty markers, and keep final judgment with the human. That is how healthcare teams get real value from Claude and ChatGPT without pretending the tools are safer than they are.

Sources

- OpenAI Help: ChatGPT and OpenAI for Health and Wellness

- OpenAI Help: How can I get a Business Associate Agreement (BAA) with OpenAI?

- Anthropic Support: Business Associate Agreements (BAA) for Commercial Customers

- FDA: Artificial Intelligence-Enabled Device Software Functions

- AMA: Physicians Embrace AI, See Upside in Reducing Administrative Burden

- AMA: Augmented Intelligence in Health Care